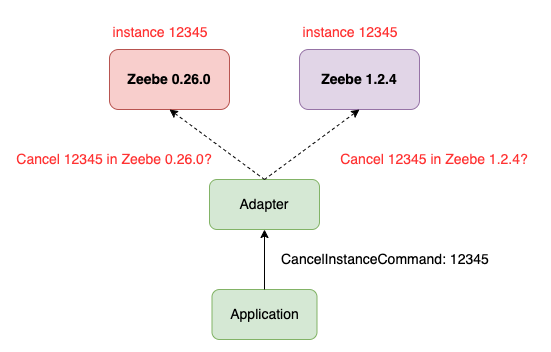

We plan to upgrade Zeebe from 0.26.0 to 1.24.0. Since we can’t perform a rolling upgrade, we have to set up a second Zeebe cluster and develop an adapter between our application and Zeebe.

The current key generation rule implies that two different Zeebe clusters may generate the same instance key or job key, because the key is an auto-incrementing number within each partition.

The related code is as follows:

DbKeyGenerator

```java
public final class DbKeyGenerator implements KeyGeneratorControls {

  private static final long INITIAL_VALUE = 0;

  // Declarations of LATEST_KEY, keyStartValue and nextValueManager are omitted in this excerpt.

  /**
   * Initializes the key state with the corresponding partition id, so that unique keys are
   * generated over all partitions.
   *
   * @param partitionId the partition to determine the key start value
   */
  public DbKeyGenerator(
      final int partitionId, final ZeebeDb zeebeDb, final TransactionContext transactionContext) {
    keyStartValue = Protocol.encodePartitionId(partitionId, INITIAL_VALUE);
    nextValueManager =
        new NextValueManager(keyStartValue, zeebeDb, transactionContext, ZbColumnFamilies.KEY);
  }

  @Override
  public long nextKey() {
    return nextValueManager.getNextValue(LATEST_KEY);
  }
}
```

NextValueManager

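```java
public final class NextValueManager {

  // Fields and the getCurrentValue helper are omitted in this excerpt.

  public long getNextValue(final String key) {
    // Read the current value for this key, increment it, and store it back.
    final long previousKey = getCurrentValue(key);
    final long nextKey = previousKey + 1;
    nextValue.set(nextKey);
    nextValueColumnFamily.put(nextValueKey, nextValue);
    return nextKey;
  }
}
```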
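To make the collision concrete, here is a minimal, self-contained sketch that mimics this scheme with an in-memory counter. `SimpleKeyGenerator` is a hypothetical stand-in for `DbKeyGenerator` plus `NextValueManager`, and the 51-bit shift is an assumption about how `Protocol.encodePartitionId` packs the partition id, so please check it against the Zeebe version in use.

```java
final class KeyCollisionDemo {

  // Assumption: the partition id sits above the 51 low-order counter bits.
  static final int KEY_BITS = 51;

  static long encodePartitionId(final int partitionId, final long key) {
    return ((long) partitionId << KEY_BITS) + key;
  }

  /** Hypothetical in-memory stand-in for DbKeyGenerator + NextValueManager. */
  static final class SimpleKeyGenerator {
    private long current;

    SimpleKeyGenerator(final int partitionId, final long initialValue) {
      current = encodePartitionId(partitionId, initialValue);
    }

    long nextKey() {
      current = current + 1;
      return current;
    }
  }

  public static void main(final String[] args) {
    // Two independent clusters, same partition id, same initial value.
    final SimpleKeyGenerator oldCluster = new SimpleKeyGenerator(1, 0);
    final SimpleKeyGenerator newCluster = new SimpleKeyGenerator(1, 0);

    final long oldKey = oldCluster.nextKey();
    final long newKey = newCluster.nextKey();

    System.out.println("old cluster key: " + oldKey);
    System.out.println("new cluster key: " + newKey);
    System.out.println("collision: " + (oldKey == newKey));
  }
}
```

Both generators start from the same encoded value for partition 1, so the first key each one hands out is identical, and the same holds for every later key at the same position in the sequence.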

This may cause conflicts and ambiguity when our application tries to cancel instances or complete jobs, as shown in the figure at the top of this post.

So, is there any way to configure DbKeyGenerator.INITIAL_VALUE for the new Zeebe cluster?

That way we could give the new cluster a sufficiently large key start value to avoid conflicts.
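To illustrate the idea, here is a rough sketch of the effect a larger start value would have. The `initialValue` parameter is hypothetical and not a setting we know to exist in Zeebe, the 51-bit layout is an assumption about `Protocol.encodePartitionId`, and the numbers are made up for the example.

```java
final class KeyOffsetSketch {

  // Assumption: 51 low-order bits hold the per-partition counter.
  static final int KEY_BITS = 51;

  /** First key a fresh cluster would hand out on a partition for a given start value. */
  static long firstKey(final int partitionId, final long initialValue) {
    if (initialValue < 0 || initialValue >= (1L << KEY_BITS) - 1) {
      throw new IllegalArgumentException("initialValue does not fit into the key bits");
    }
    return ((long) partitionId << KEY_BITS) + initialValue + 1;
  }

  /** True if the new cluster would start at or below the old cluster's latest key. */
  static boolean mayCollide(final long oldLatestKey, final long newFirstKey) {
    return newFirstKey <= oldLatestKey;
  }

  public static void main(final String[] args) {
    final int partitionId = 1;
    // Pretend the old cluster has already generated 5,000,000 keys on this partition.
    final long oldLatestKey = ((long) partitionId << KEY_BITS) + 5_000_000L;

    final long withoutOffset = firstKey(partitionId, 0L);
    final long withOffset = firstKey(partitionId, 1_000_000_000_000L); // hypothetical start value

    System.out.println("default start may collide: " + mayCollide(oldLatestKey, withoutOffset));
    System.out.println("offset start may collide:  " + mayCollide(oldLatestKey, withOffset));
  }
}
```

The offset only helps as long as the old cluster's counter stays below it, so it would need enough headroom for the keys the old cluster still generates while its remaining instances finish.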