I want to archive and then delete completed process instance data from Elasticsearch (used by Camunda Operate).

Based on my analysis, I planned to use an Elasticsearch ILM policy named operate_delete_archived_indices and then apply it to indices like:

operate-list-view-8.3.0_2026-02-19

However, after a fresh Camunda installation via Helm, the expected ILM policy does not seem to be created automatically.

What I expected

- The Helm deployment to create (or wire) an ILM policy for Operate indices, or at least make it clear how to attach a custom ILM policy to indices like operate-list-view-*.
- Ability to archive (e.g., snapshot/export) completed data and then delete indices containing completed instances after a retention period.

What actually happened

- No ILM policy is present after the fresh install (I was expecting something like operate_delete_archived_indices).
- I therefore attempted to create and attach the ILM policy via the Elasticsearch APIs.
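For anyone reproducing this, one way to confirm whether the policy exists is to query the ILM policy API directly. The following is a minimal sketch, assuming Elasticsearch is reachable at `http://localhost:9200` (for example through a port-forward) without authentication; the function names are hypothetical helpers, not part of Camunda or Elasticsearch.

```shell
#!/usr/bin/env bash
# Assumption: Elasticsearch is reachable at this address (e.g. via kubectl port-forward).
ES="${ES:-http://localhost:9200}"

# Build the URL of the ILM policy API for a given policy name.
ilm_policy_url() {
  printf '%s/_ilm/policy/%s' "$ES" "$1"
}

# Print only the HTTP status code of the lookup.
# A missing policy is typically reported as 404, an existing one as 200.
ilm_policy_status() {
  curl -s -o /dev/null -w '%{http_code}' "$(ilm_policy_url "$1")"
}

# Usage (needs a running cluster):
#   ilm_policy_status operate_delete_archived_indices
```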

What I tried

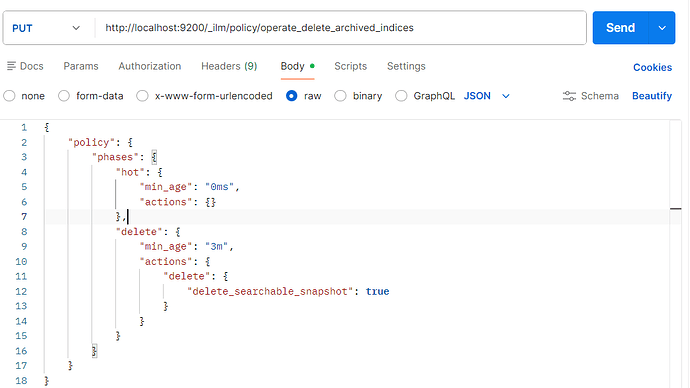

1) Create ILM policy
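In text form, a request of this kind can be sketched roughly as follows. It mirrors the policy body used in the values file further down (an empty hot phase plus a delete phase); the host, the default policy name, and the helper function names are assumptions for illustration.

```shell
#!/usr/bin/env bash
# Assumptions: local, unauthenticated Elasticsearch; policy name taken from this post.
ES="${ES:-http://localhost:9200}"
POLICY="${POLICY:-operate_delete_archived_indices}"

# Build the policy body; $1 is the minimum index age before deletion (e.g. 3m, 30d).
ilm_policy_body() {
  printf '{"policy":{"phases":{"hot":{"min_age":"0ms","actions":{}},"delete":{"min_age":"%s","actions":{"delete":{}}}}}}' "$1"
}

# PUT the policy; -f makes curl exit non-zero on an HTTP error status.
create_ilm_policy() {
  curl -sf -X PUT "$ES/_ilm/policy/$POLICY" \
    -H "Content-Type: application/json" \
    -d "$(ilm_policy_body "$1")"
}

# Usage (needs a running cluster):
#   create_ilm_policy 3m
```

A 3-minute minimum age only makes sense for local testing; a real retention period would be days or longer.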

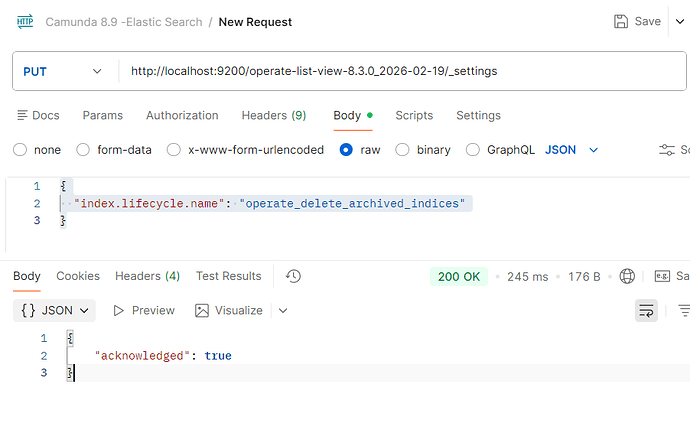

2) Attach ILM policy to the index

Endpoint
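Attaching a policy to an existing index goes through the index settings API, which is also what the postStart hook in the values file below does. A minimal sketch, assuming the same local Elasticsearch address and policy name as above; the helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Assumptions: local, unauthenticated Elasticsearch; policy name taken from this post.
ES="${ES:-http://localhost:9200}"
POLICY="${POLICY:-operate_delete_archived_indices}"

# Build the settings body that points an index at the given ILM policy.
index_settings_body() {
  printf '{"index.lifecycle.name":"%s"}' "$1"
}

# Apply the policy to one index (or an index pattern) via PUT <index>/_settings.
attach_ilm_policy() {
  curl -sf -X PUT "$ES/$1/_settings" \
    -H "Content-Type: application/json" \
    -d "$(index_settings_body "$POLICY")"
}

# Usage (needs a running cluster):
#   attach_ilm_policy operate-list-view-8.3.0_2026-02-19
```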

camunda-values.yaml:

global:
  elasticsearch:
    enabled: true
  secrets:
    autoGenerated: true
    name: "camunda-credentials"
  identity:
    auth:
      enabled: true
      publicIssuerUrl: "http://localhost:18080/auth/realms/camunda-platform"
      webModeler:
        redirectUrl: "http://localhost:8070"
      console:
        redirectUrl: "http://localhost:8087"
      optimize:
        redirectUrl: "http://localhost:8083"
        secret:
          existingSecret: "camunda-credentials"
          existingSecretKey: "identity-optimize-client-token"
      orchestration:
        redirectUrl: "http://localhost:8080"
        secret:
          existingSecret: "camunda-credentials"
          existingSecretKey: "identity-orchestration-client-token"
      connectors:
        secret:
          existingSecret: "camunda-credentials"
          existingSecretKey: "identity-connectors-client-token"
  security:
    authentication:
      method: oidc

identity:
  enabled: true
  firstUser:
    secret:
      existingSecret: "camunda-credentials"
      existingSecretKey: "identity-firstuser-password"

identityKeycloak:
  enabled: true
  postgresql:
    auth:
      existingSecret: "camunda-credentials"

connectors:
  enabled: true
  security:
    authentication:
      method: oidc
      oidc:
        secret:
          existingSecret: "camunda-credentials"
          existingSecretKey: "identity-connectors-client-token"

webModeler:
  enabled: true
  restapi:
    mail:
      fromAddress: noreply@example.com

webModelerPostgresql:
  resources:
    requests:
      cpu: "50m"
      memory: "128Mi"
    limits:
      cpu: "150m"
      memory: "256Mi"
  enabled: true
  auth:
    existingSecret: "camunda-credentials"
    secretKeys:
      adminPasswordKey: "webmodeler-postgresql-admin-password"
      userPasswordKey: "webmodeler-postgresql-user-password"

orchestration:
  image:
    repository: zeebe-mongodb-exporter
    tag: 8.8.11-mongodb-v2
    pullPolicy: Never
  resources:
    requests:
      cpu: "500m"
      memory: "256Mi"
    limits:
      cpu: "1"
      memory: "1Gi"
  enabled: true
  clusterSize: "1"
  partitionCount: "1"
  replicationFactor: "1"
  exporters:
    mongodb:
      className: io.github.camunda8.mongodb.exporter.MongoDBExporter
      jarPath: /usr/local/zeebe/exporters/camunda8-mongodb-exporter-1.0-SNAPSHOT.jar
      args:
        # ⛔ REDACTED: Do not post real credentials/hosts/params
        connectionUri: "mongodb+srv://<USERNAME>:<PASSWORD>@<CLUSTER_HOST>/<PARAMS>"
        database: "<DB_NAME>"
        collection: "<COLLECTION_NAME>"
        batchSize: 100
        flushInterval: 1000
  env:
    - name: LOGGING_LEVEL_IO_GITHUB_CAMUNDA8
      value: "DEBUG"
    # MongoDB Exporter configuration (REDACTED)
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_CLASSNAME
      value: io.github.camunda8.mongodb.exporter.MongoDBExporter
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_JARPATH
      value: /usr/local/zeebe/exporters/camunda8-mongodb-exporter-1.0-SNAPSHOT.jar
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_ARGS_CONNECTIONURI
      value: "mongodb+srv://<USERNAME>:<PASSWORD>@<CLUSTER_HOST>/<PARAMS>"
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_ARGS_DATABASE
      value: "<DB_NAME>"
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_ARGS_COLLECTION
      value: "<COLLECTION_NAME>"
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_ARGS_BATCHSIZE
      value: "100"
    - name: ZEEBE_BROKER_EXPORTERS_MONGODB_ARGS_FLUSHINTERVAL
      value: "1000"
    # CamundaExporter (Elasticsearch) - Archiving Configuration (LOCAL TESTING)
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_WAITPERIODBEFOREARCHIVING
      value: "1m"
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_ROLLOVERINTERVAL
      value: "1h"
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_ROLLOVERBATCHSIZE
      value: "3"
    # Retention (LOCAL TESTING - 3 min)
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_RETENTION_ENABLED
      value: "true"
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_RETENTION_MINIMUMAGE
      value: "3m"
    - name: ZEEBE_BROKER_EXPORTERS_CAMUNDAEXPORTER_ARGS_HISTORY_RETENTION_APPLYPOLICYJOBINTERVAL
      value: "PT2M"
    # ── Operate Archiver + Retention ──
    - name: CAMUNDA_OPERATE_ARCHIVER_WAITPERIODBEFOREARCHIVING
      value: "1m"
    - name: CAMUNDA_OPERATE_ARCHIVER_ROLLOVERINTERVAL
      value: "1h"
    - name: CAMUNDA_OPERATE_ARCHIVER_ROLLOVERBATCHSIZE
      value: "3"
    - name: CAMUNDA_OPERATE_ARCHIVER_ENABLED
      value: "true"
    - name: CAMUNDA_OPERATE_ARCHIVER_ILMENABLED
      value: "true"
    - name: CAMUNDA_OPERATE_ARCHIVER_ILMMINAGEFORDELETEARCHIVEDINDICES
      value: "3m"
    - name: CAMUNDA_OPERATE_ARCHIVER_RETENTION_ENABLED
      value: "true"
    - name: CAMUNDA_OPERATE_ARCHIVER_RETENTION_MINIMUMAGE
      value: "3m"
    - name: CAMUNDA_OPERATE_ARCHIVER_RETENTION_POLICYCHECKINTERVAL
      value: "1m"
  security:
    authentication:
      method: oidc
      oidc:
        redirectUrl: "http://localhost:8080"
        secret:
          existingSecret: "camunda-credentials"
          existingSecretKey: "identity-orchestration-client-token"

console:
  enabled: true
  image:
    repository: camunda/console
    tag: "8.8.11"
    pullPolicy: IfNotPresent

elasticsearch:
  resources:
    requests:
      cpu: "250m"
      memory: "512Mi"
    limits:
      cpu: "500m"
      memory: "2Gi"
  enabled: true
  master:
    replicaCount: 1
    resources:
      requests:
        cpu: "250m"
        memory: "512Mi"
      limits:
        cpu: "500m"
        memory: "2Gi"
    extraEnvs:
      - name: ELASTICSEARCH_HEAP_SIZE
        value: "1024m"
    persistence:
      size: 10Gi
    # ── ILM policies + index template for archived indices ──────────────────
    lifecycleHooks:
      postStart:
        exec:
          command:
            - /bin/bash
            - -c
            - |
              ES="http://localhost:9200"
              ILM_BODY='{"policy":{"phases":{"hot":{"min_age":"0ms","actions":{}},"delete":{"min_age":"3m","actions":{"delete":{"delete_searchable_snapshot":true}}}}}}'
              TPL_BODY='{"index_patterns":["operate-*_20*","tasklist-*_20*"],"template":{"settings":{"index.lifecycle.name":"operate_delete_archived_indices"}},"priority":100}'
              IDX_BODY='{"index.lifecycle.name":"operate_delete_archived_indices"}'
              for i in $(seq 1 24); do
                curl -sf "$ES/_cluster/health?wait_for_status=yellow&timeout=5s" >/dev/null 2>&1 && break
                sleep 5
              done
              printf '%s' "$ILM_BODY" > /tmp/ilm1.json
              curl -s -X PUT "$ES/_ilm/policy/operate_delete_archived_indices" -H "Content-Type: application/json" -d @/tmp/ilm1.json || true
              printf '%s' "$ILM_BODY" > /tmp/ilm2.json
              curl -s -X PUT "$ES/_ilm/policy/camunda-retention-policy" -H "Content-Type: application/json" -d @/tmp/ilm2.json || true
              printf '%s' "$TPL_BODY" > /tmp/tpl.json
              curl -s -X PUT "$ES/_index_template/operate-archived-ilm" -H "Content-Type: application/json" -d @/tmp/tpl.json || true
              for idx in $(curl -s "$ES/_cat/indices/operate-*_20*,tasklist-*_20*?h=index" 2>/dev/null); do
                idx=$(echo "$idx" | tr -d '[:space:]')
                [ -z "$idx" ] && continue
                printf '%s' "$IDX_BODY" > /tmp/idx.json
                curl -s -X PUT "$ES/$idx/_settings" -H "Content-Type: application/json" -d @/tmp/idx.json || true
              done
              rm -f /tmp/ilm1.json /tmp/ilm2.json /tmp/tpl.json /tmp/idx.json
  extraConfig:
    logger.org.elasticsearch.deprecation: "OFF"
    indices.lifecycle.poll_interval: "10s"
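
To see whether a dated index has actually picked up the policy, the ILM explain API reports the managed state, the policy name, and the current phase for each index. A minimal sketch, assuming Elasticsearch is reachable at `http://localhost:9200` without authentication; the helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Assumption: Elasticsearch is reachable at this address (e.g. via kubectl port-forward).
ES="${ES:-http://localhost:9200}"

# Build the ILM explain URL for an index name or pattern.
ilm_explain_url() {
  printf '%s/%s/_ilm/explain' "$ES" "$1"
}

# Fetch the ILM state of the matching indices as JSON.
ilm_explain() {
  curl -s "$(ilm_explain_url "$1")"
}

# Usage (needs a running cluster):
#   ilm_explain 'operate-list-view-*'
```

If the response shows `"managed": false` for an archived index, the policy was never attached to it, which narrows the problem to the attach step rather than the policy itself.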