Hi,

We have a Self-Managed Camunda 8.3 setup running on OpenShift.

All the components are configured, and we are able to deploy process models through Desktop Modeler. The process model contains a REST Outbound Connector, and all process instances are stuck at this node.

On checking the Connector pod logs, I see a timeout when connecting to the Zeebe gateway. Below are the logs.

io.grpc.StatusRuntimeException: UNAVAILABLE: io exception

at io.grpc.Status.asRuntimeException(Status.java:537)

at io.grpc.stub.ClientCalls$StreamObserverToCallListenerAdapter.onClose(ClientCalls.java:481)

at io.grpc.internal.ClientCallImpl.closeObserver(ClientCallImpl.java:574)

at io.grpc.internal.ClientCallImpl.access$300(ClientCallImpl.java:72)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInternal(ClientCallImpl.java:742)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInContext(ClientCallImpl.java:723)

at io.grpc.internal.ContextRunnable.run(ContextRunnable.java:37)

at io.grpc.internal.SerializingExecutor.run(SerializingExecutor.java:133)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source)

at java.base/java.lang.Thread.run(Unknown Source)

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: finishConnect(…) failed: Connection timed out: ciao-camunda-zeebee-gateway-int06.apps.XXXXXXXXXXXXXXXXXXXX/xxxxxxxxxxxxxxxxxxxxx:26500

Caused by: java.net.ConnectException: finishConnect(…) failed: Connection timed out

at io.netty.channel.unix.Errors.newConnectException0(Errors.java:166)

at io.netty.channel.unix.Errors.handleConnectErrno(Errors.java:131)

at io.netty.channel.unix.Socket.finishConnect(Socket.java:359)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.doFinishConnect(AbstractEpollChannel.java:710)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.finishConnect(AbstractEpollChannel.java:687)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.epollOutReady(AbstractEpollChannel.java:567)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:499)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:407)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:997)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.base/java.lang.Thread.run(Unknown Source)

The same gateway host works fine for Desktop Modeler and also for a Spring Boot Zeebe client.

I have the exact same issue. Did anyone figure it out?

Are connectors supposed to connect over REST or gRPC?

Hey @deepakkapoor23, let me try to help. Connectors rely mainly on a gRPC connection to receive new jobs from Zeebe. Please let me know which Connectors version you are trying to run, so that I can give you the right config format to use.

Hi @chillleader - I used this Helm chart to set up a local k8s cluster.

camunda-platform-core-kind-values

This has connectors enabled. I am not sure where the versions are mapped but it pulls camunda/connectors-bundle:8.6.8

and in the connectors pod logs I can see this error repeatedly for every connector.

2025-02-21T03:52:38.924Z WARN 1 --- [ult-executor-41] io.camunda.zeebe.client.job.poller : Failed to activate jobs for worker AWS SNS Outbound and job type io.camunda:aws-sns:1

io.grpc.StatusRuntimeException: UNAVAILABLE: io exception

at io.grpc.Status.asRuntimeException(Status.java:533)

at io.grpc.stub.ClientCalls$StreamObserverToCallListenerAdapter.onClose(ClientCalls.java:481)

at io.grpc.internal.ClientCallImpl.closeObserver(ClientCallImpl.java:564)

at io.grpc.internal.ClientCallImpl.access$100(ClientCallImpl.java:72)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInternal(ClientCallImpl.java:729)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInContext(ClientCallImpl.java:710)

at io.grpc.internal.ContextRunnable.run(ContextRunnable.java:37)

at io.grpc.internal.SerializingExecutor.run(SerializingExecutor.java:133)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source)

at java.base/java.lang.Thread.run(Unknown Source)

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: finishConnect(..) failed: Connection refused: camunda-platform-zeebe-gateway/10.96.199.141:26500

Caused by: java.net.ConnectException: finishConnect(..) failed: Connection refused

at io.netty.channel.unix.Errors.newConnectException0(Errors.java:166)

at io.netty.channel.unix.Errors.handleConnectErrno(Errors.java:131)

at io.netty.channel.unix.Socket.finishConnect(Socket.java:359)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.doFinishConnect(AbstractEpollChannel.java:715)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.finishConnect(AbstractEpollChannel.java:692)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.epollOutReady(AbstractEpollChannel.java:567)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:491)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:399)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:997)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.base/java.lang.Thread.run(Unknown Source)

I am pretty sure camunda-platform-zeebe-gateway is running on port 26500, because I am able to connect to it from a Spring Boot app, deploy processes, and execute workers.

On a related note, I want to be able to use inbound connectors (Kafka), and it seems this Kind values file disables inbound:

    connectors:
      enabled: true
      inbound:
        mode: disabled

I tried enabling it by using credentials mode and setting secrets, but I can't find a good example in the documentation of how to set up the Identity and Keycloak services. Can you please point me to an example or a Helm chart I can use as is, with all the components, that will run fine on a kind control plane? Thanks!

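Your error is a bit different from the OP's.

"Connection refused" means that the address you were trying to connect to was reachable but is not accepting connections on that port, whereas the OP's "Connection timed out" means no response came back at all.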

Unfortunately, I cannot tell you why your host camunda-platform-zeebe-gateway refused the connection.
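To tell apart the two failure modes seen in this thread ("Connection timed out" in the first post, "Connection refused" here), a plain TCP probe run from the same network as the connectors pod can help. The following is a minimal sketch, assuming Python is available where you run it; the function name `probe` and its return labels are made up for illustration and are not part of any Camunda tooling.

```python
import socket


def probe(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify a single TCP connection attempt to host:port."""
    try:
        # create_connection resolves the name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # The host answered, but nothing is listening on that port.
        return "refused"
    except (socket.timeout, TimeoutError):
        # No answer at all: often a firewall, network policy, or wrong route.
        return "timeout"
    except socket.gaierror:
        # The host name could not be resolved.
        return "dns-failure"
    except OSError as exc:
        return f"error: {exc}"
```

For example, `probe("camunda-platform-zeebe-gateway", 26500)` uses the host and port from the log above. A result of `"refused"` suggests the Service resolves but nothing is accepting connections behind it, while `"timeout"` points more towards routing or network policy. This only checks TCP reachability, not whether gRPC itself works.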

@chillleader I noticed this error in the log for outbound connectors only, not for inbound. How can I tell if inbound connectors are even running?

I tried to run the Helm chart using kind and this values file. The zeebe-gateway container was failing for me due to an OOM error, so I increased the resource limits in the values file:

    zeebeGateway:
      replicas: 1
      resources:
        limits:
          cpu: 500m
          memory: 1Gi

With this change, the cluster started successfully and turned healthy in a couple of minutes, and the connectors log showed no errors. Can you check if you have a similar issue?
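One way to check for the same OOM problem is to look at the container statuses that Kubernetes reports for the pods. Below is a small sketch, assuming you feed it the parsed JSON from `kubectl get pods -n <namespace> -o json`; the function name `oom_killed_containers` is hypothetical, and it relies on the standard `status.containerStatuses[].state` / `lastState` fields of the Pod API.

```python
def oom_killed_containers(pod_list: dict) -> list[tuple[str, str]]:
    """Return (pod name, container name) pairs whose current or previous
    termination reason is OOMKilled."""
    hits = []
    for pod in pod_list.get("items", []):
        pod_name = pod.get("metadata", {}).get("name", "<unknown>")
        statuses = pod.get("status", {}).get("containerStatuses") or []
        for cs in statuses:
            # A container that was killed and restarted keeps the reason
            # under lastState; one that is currently dead has it under state.
            for key in ("state", "lastState"):
                terminated = (cs.get(key) or {}).get("terminated") or {}
                if terminated.get("reason") == "OOMKilled":
                    hits.append((pod_name, cs.get("name", "<unknown>")))
                    break
    return hits
```

If the zeebe-gateway pod shows up in the result, raising the memory limit as described above is a reasonable first thing to try.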

The default Kind values file disables inbound connectors. If inbound connectors are enabled, the connectors pod will expose an endpoint (/inbound) that returns information about active inbound connectors. If inbound connectors are disabled, the endpoint will return 404.

For more info about connectors params in the Helm chart, see this page.

If you want to run a setup with inbound connectors enabled, check the default values.yaml - the Helm chart starts with inbound connectors active by default if you don’t use the Kind values file.
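The `/inbound` check described above can be scripted. This is a rough sketch, assuming the connectors pod's HTTP port has been made reachable locally (for example with `kubectl port-forward`); the base URL you pass in and the function name `inbound_status` are assumptions, and the response body is returned as-is because its exact format is not specified in this thread.

```python
import urllib.error
import urllib.request


def inbound_status(base_url: str, timeout: float = 5.0) -> str:
    """Query <base_url>/inbound and report whether inbound connectors answer."""
    url = base_url.rstrip("/") + "/inbound"
    # Bypass any configured HTTP proxy, since the target is typically a
    # locally port-forwarded address.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", errors="replace")
            return f"enabled: {body}"
    except urllib.error.HTTPError as err:
        # HTTPError must be caught before URLError (it is a subclass).
        if err.code == 404:
            return "disabled (404)"
        return f"unexpected HTTP status {err.code}"
    except urllib.error.URLError as err:
        return f"unreachable: {err.reason}"
```

A result starting with `enabled:` means the endpoint exists and the body lists whatever the runtime reports; `disabled (404)` matches the behaviour described for a deployment with inbound connectors turned off.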

Thanks @chillleader

I can't recall what I changed in the Helm values, but this is no longer an issue. I didn't have to increase resource limits. And yes, the inbound connectors are running in the bundle.

Could you please share your complete Helm values? You can replace sensitive information with placeholders.

The Helm charts have already undergone significant changes, and 8.3 is no longer maintained; thus I no longer have the setup discussed in this topic.

Can you please describe your issue and your setup details @Maurice_Tchangue?

I am still getting a "Connection refused" error from the connectors to the Zeebe gateway:

io.grpc.StatusRuntimeException: UNAVAILABLE: io exception

at io.grpc.Status.asRuntimeException(Status.java:532)

at io.grpc.stub.ClientCalls$StreamObserverToCallListenerAdapter.onClose(ClientCalls.java:581)

at io.grpc.internal.ClientCallImpl.closeObserver(ClientCallImpl.java:566)

at io.grpc.internal.ClientCallImpl.access$100(ClientCallImpl.java:72)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInternal(ClientCallImpl.java:734)

at io.grpc.internal.ClientCallImpl$ClientStreamListenerImpl$1StreamClosed.runInContext(ClientCallImpl.java:715)

at io.grpc.internal.ContextRunnable.run(ContextRunnable.java:37)

at io.grpc.internal.SerializingExecutor.run(SerializingExecutor.java:133)

at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source)

at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source)

at java.base/java.lang.Thread.run(Unknown Source)

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: finishConnect(..) failed with error(-111): Connection refused: camunda-zeebe-gateway/172.20.87.85:26500

Caused by: java.net.ConnectException: finishConnect(..) failed with error(-111): Connection refused

at io.netty.channel.unix.Errors.newConnectException0(Errors.java:166)

at io.netty.channel.unix.Errors.handleConnectErrno(Errors.java:131)

at io.netty.channel.unix.Socket.finishConnect(Socket.java:359)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.doFinishConnect(AbstractEpollChannel.java:715)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.finishConnect(AbstractEpollChannel.java:692)

at io.netty.channel.epoll.AbstractEpollChannel$AbstractEpollUnsafe.epollOutReady(AbstractEpollChannel.java:567)

at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:491)

at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:399)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:998)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.base/java.lang.Thread.run(Unknown Source)

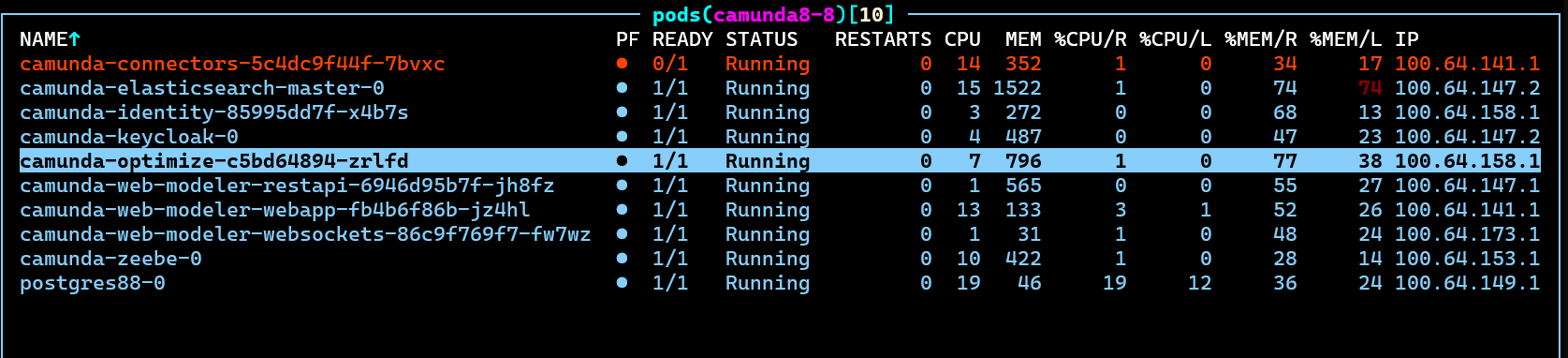

Here are some commands and their corresponding outputs:

    $ kubectl get endpoints camunda-zeebe-gateway -n camunda8-8
    Warning: v1 Endpoints is deprecated in v1.33+; use discovery.k8s.io/v1 EndpointSlice
    NAME                    ENDPOINTS                                                      AGE
    camunda-zeebe-gateway   100.64.153.148:26500,100.64.153.148:9600,100.64.153.148:8080   100m

And here is a screenshot from my current deployment.

Which Camunda platform version are you running and how did you set up your deployment? Did you encounter this issue when setting up a fresh environment using our official Helm charts, or is it a result of a version upgrade / modification to your existing environment?

Here is the command I use to install Camunda:

helm install camunda camunda/camunda-platform -f camunda8-values.yaml -n camunda8-8 --version 13.1.1

Could you share your `camunda8-values.yaml` as well? Feel free to cut out the sensitive parts, of course. Also, please try using the latest version, 13.4.2.