Hi,

I have to model the following use case. A daily batch job scans a database table and pushes each record on for further processing. The number of records is in the order of hundreds of millions.

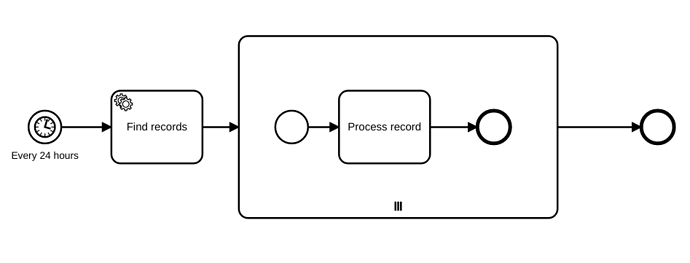

One way I could think of modeling it is with a Timer Start Event that starts the job. The problem is what to do afterwards, i.e. how to split the initial token that kicks off the job into multiple tokens, one per record (each token independent of the others). I thought of using a Multi-Instance activity, but will it work? The result set from scanning the repository is huge and doesn't fit in memory, though I might be missing something about Camunda here. A simplified version of the flow is attached above.

An alternative is to run the batch job outside Camunda and have it push individual records to Camunda. That would work, but I'd like to keep the job inside the flow because it contains some logic.
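For reference, the external alternative could look roughly like the following. It is a minimal sketch under assumptions, not a tested integration: rows are consumed one at a time from an `Iterator` (which, in a real job, would be backed by a streaming cursor such as a JDBC `ResultSet` with a fetch size), so the full result set never has to sit in memory. `Row`, `pushAll` and the process key `recordProcess` are hypothetical names; the Camunda call is only indicated in a comment.

```java
import java.util.Iterator;
import java.util.function.Consumer;
import java.util.stream.LongStream;

public class BatchPusher {

    // Hypothetical type standing in for one row of the scanned table.
    record Row(long id, String payload) {}

    // Pushes rows one at a time. The iterator is consumed lazily, so only the
    // current row is held here; whether the source streams depends on how the
    // iterator is implemented (assumption: a cursor-backed iterator).
    static long pushAll(Iterator<Row> rows, Consumer<Row> starter) {
        long count = 0;
        while (rows.hasNext()) {
            starter.accept(rows.next());
            count++;
        }
        return count;
    }

    public static void main(String[] args) {
        // Simulated source: rows are generated on demand, not loaded up front.
        Iterator<Row> rows = LongStream.rangeClosed(1, 5)
                .mapToObj(i -> new Row(i, "record-" + i))
                .iterator();

        long pushed = pushAll(rows, row ->
                // With the Camunda 7 Java API, this is where something like
                // runtimeService.startProcessInstanceByKey("recordProcess",
                //     String.valueOf(row.id()), Map.of("payload", row.payload()))
                // could start one independent instance per record.
                System.out.println("start instance for " + row.id()));

        System.out.println("pushed " + pushed);
    }
}
```

Each record then becomes its own process instance, which gives the "one independent token per record" behaviour without a single Multi-Instance activity holding a huge collection.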

Could someone shed some light on whether I am heading in the right direction?

Thanks in advance,