The data (Member List) returned from a service task (Fetch Data), which contains the collection for the multi-instance subprocess (Member Salary Update), is too big to fit into a process variable. What is the best way to work around this issue?

Start a new process instance for each element, i.e. do not use the multi-instance feature. Or save the results somewhere and process them in a batch manner. Your requirements will show the way!

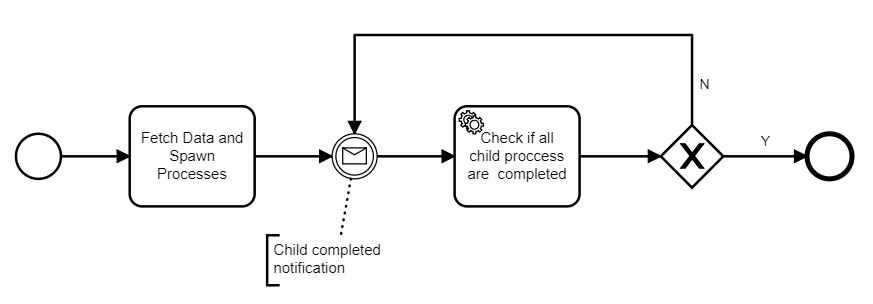

Thanks for the feedback, but this will lead to the model below, which I was trying to avoid:

The problems are:

- No visibility of child processes in the parent.

- Child notifications will get lost while the parent is waiting in the “Check if all child processes are completed” service task.

Do you perhaps have a suggestion on how to improve on the above pattern?

Your problems seem to be a design smell to me. Are you sure you must process the mass data as a process? What is the “process” here?

You could do the following:

- Fetch the data set and save it somewhere (a DB?). Assign an ID to the data set.

- Start a batch that processes the data set. This might even be an activity of type “external task”; that way you’d stay in the context of your process.

- At the end of the batch, send a “completed” message to the process.

IMO, business processes are not the right tool for mass data processing.
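A minimal plain-Java sketch of the three steps above, assuming an in-memory map as a stand-in for the database table and simple callbacks in place of the salary update and the “completed” message. `DataSetBatch`, `saveDataSet`, `processDataSet` and the batch size are hypothetical names chosen for illustration, not Camunda API. The point is that only the short data set ID would need to be kept as a process variable.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Consumer;

public class DataSetBatch {

    // Hypothetical stand-in for a database table keyed by data set ID.
    static final Map<String, List<String>> STORE = new ConcurrentHashMap<>();

    // Step 1: save the fetched data set and return its ID; only this ID
    // would need to travel through the process as a variable.
    static String saveDataSet(List<String> members) {
        String id = UUID.randomUUID().toString();
        STORE.put(id, new ArrayList<>(members));
        return id;
    }

    // Step 2: walk the stored data set in batches of batchSize records.
    // Step 3: call onCompleted (standing in for the "completed" message) at the end.
    static int processDataSet(String id, int batchSize,
                              Consumer<String> updateMember,
                              Consumer<String> onCompleted) {
        List<String> members = STORE.get(id);
        int batches = 0;
        for (int from = 0; from < members.size(); from += batchSize) {
            int to = Math.min(from + batchSize, members.size());
            members.subList(from, to).forEach(updateMember);
            batches++;
        }
        onCompleted.accept(id);
        return batches;
    }

    public static void main(String[] args) {
        List<String> members = new ArrayList<>();
        for (int i = 0; i < 2500; i++) {
            members.add("member-" + i);
        }
        String id = saveDataSet(members);
        int batches = processDataSet(id, 1000,
                member -> { /* update this member's salary here */ },
                doneId -> System.out.println("completed data set " + doneId));
        System.out.println("processed in " + batches + " batches"); // processed in 3 batches
    }
}
```

In a real setup, step 2 could be the external task worker mentioned above, and step 3 would correlate a message back to the waiting process instance.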

What you are suggesting is exactly what I am trying to achieve with my first model at the top: using Camunda external tasks as a ‘batching mechanism’. But instead of processing ALL members in a single worker, I want to create/register one external task per member update. The limitation in Camunda (Modeler?) is that I need to provide a process variable containing the collection to iterate over in order to create the multi-instance subprocess, and this collection is quite big and does not fit in a process variable. To overcome this, is there a way to call a service from within the multi-instance collection reference, e.g. `${call-some-service-to-get-data-instead-of-using-process-variable}`?

What do you mean by “does not fit in a process variable”? Java collections can hold practically any number of elements. Where exactly does the error occur? The only place I can think of is the serialized variable, which is stored in the Camunda DB. This might limit its size.

Why do you want to process the elements individually?
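To get a feel for how quickly a large collection grows once serialized, the following sketch measures a list of IDs with standard Java serialization. It is only an approximation: `SerializedSize` is a hypothetical helper, Camunda may be configured to serialize objects differently (for example as JSON), and the effective size limit may depend on the engine version and the database in use.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

public class SerializedSize {

    // Serialize a value with standard Java serialization and return the byte count.
    static int serializedBytes(Object value) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(value);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.size();
    }

    public static void main(String[] args) {
        // Compare a small and a large list of member IDs.
        for (int n : new int[] {1_000, 100_000}) {
            List<String> ids = new ArrayList<>();
            for (int i = 0; i < n; i++) {
                ids.add("member-" + i);
            }
            System.out.println(n + " ids -> " + serializedBytes(ids) + " bytes");
        }
    }
}
```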

Hi @gio, what was your eventual solution for working with a huge array in a parallel multi-instance? I have a similar use case where I may even encounter a few million records, and I am trying to figure out the best way to tackle that.

Hi,

I am interested in an answer to this post as well. I would like to let users process an arbitrarily large number of records (and each record can in turn hold arbitrarily large data), so I cannot guarantee that the full collection will fit.

In my case as well, the collection has to be provided by a ServiceTask.

I was thinking of a paging mechanism, through which Camunda would invoke the ServiceTask to get a chunk of, for instance, 100 records, once per 100-record page.

Is this doable with Camunda?

Many thanks!
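The paging idea could look roughly like this in plain Java. `fetchPage(offset, limit)` is a hypothetical service signature, and this is not a built-in Camunda feature: the loop would have to live in a worker or delegate, or be modelled explicitly in the process. Each call returns at most `pageSize` records, so the full collection never has to be held in one variable.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.BiFunction;
import java.util.function.Consumer;

public class PagedFetch {

    // Call fetchPage(offset, limit) until it returns an empty or short page,
    // handing each page to handlePage. Returns the number of non-empty pages.
    static int processAllPages(BiFunction<Integer, Integer, List<String>> fetchPage,
                               int pageSize, Consumer<List<String>> handlePage) {
        int offset = 0;
        int pages = 0;
        while (true) {
            List<String> page = fetchPage.apply(offset, pageSize);
            if (page.isEmpty()) {
                break;
            }
            handlePage.accept(page);
            pages++;
            if (page.size() < pageSize) {
                break; // a short page means this was the last one
            }
            offset += page.size();
        }
        return pages;
    }

    public static void main(String[] args) {
        int total = 250; // pretend the backing service holds 250 records
        BiFunction<Integer, Integer, List<String>> service = (offset, limit) -> {
            List<String> page = new ArrayList<>();
            for (int i = offset; i < Math.min(offset + limit, total); i++) {
                page.add("record-" + i);
            }
            return page;
        };
        int pages = processAllPages(service, 100,
                page -> System.out.println("got " + page.size() + " records"));
        System.out.println(pages + " pages"); // 3 pages
    }
}
```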

Hi @bfredo123,

you can use a nested multi-instance (a multi-instance subprocess that contains a multi-instance service task) to keep the number of instances of a single multi-instance activity smaller.

Hope this helps, Ingo
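As a rough sizing sketch of the nested multi-instance suggestion: with N records and a chunk size c, the outer multi-instance subprocess needs ceil(N / c) instances and each inner multi-instance task at most c, so a chunk size near √N keeps both levels small (1,000,000 records become 1000 × 1000). The helper names are hypothetical, and the list of chunks still has to be produced and stored somewhere.

```java
import java.util.ArrayList;
import java.util.List;

public class NestedMultiInstance {

    // Outer instances needed for `total` items: ceil(total / chunkSize).
    static int outerInstances(int total, int chunkSize) {
        return (total + chunkSize - 1) / chunkSize;
    }

    // Split items into chunks of at most chunkSize; each chunk would be the
    // collection of one inner multi-instance activity.
    static <T> List<List<T>> chunk(List<T> items, int chunkSize) {
        List<List<T>> chunks = new ArrayList<>();
        for (int from = 0; from < items.size(); from += chunkSize) {
            int to = Math.min(from + chunkSize, items.size());
            chunks.add(new ArrayList<>(items.subList(from, to)));
        }
        return chunks;
    }

    public static void main(String[] args) {
        System.out.println(outerInstances(1_000_000, 1_000)); // 1000
        List<Integer> items = new ArrayList<>();
        for (int i = 0; i < 2_500; i++) {
            items.add(i);
        }
        for (List<Integer> c : chunk(items, 1_000)) {
            System.out.println("inner instances: " + c.size()); // 1000, 1000, 500
        }
    }
}
```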

Thanks @Ingo_Richtsmeier, this could work, but I would prefer an approach where the user does not have to worry about the technical restrictions of the system, with a workflow definition that is independent of the volume of data, if at all possible.

Best regards

Hello,

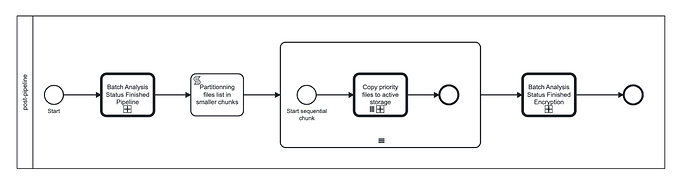

We ran into a similar issue when running a multi-instance subprocess with 20,000 instances.

The execution tree consumed all available CPU and memory until the pod crashed with an out-of-memory (OOM) error.

In the long term we’ll stop using subprocesses at this kind of volume, but in the meantime we’ve partitioned the list of items into smaller chunks of 1000 items.

The 1000 items within a chunk run in parallel, and the chunks run sequentially. This completely solved our performance issues (exhausted CPU and memory, Cockpit totally unavailable).

The script looks like this:

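```groovy
// Camunda script task (Groovy): split the "priorFiles" list into chunks of
// at most 1000 items using the Groovy GDK method collate().
def files = execution.getVariable("priorFiles")

def lists = files.collate(1000)

println("Partitioned list of ${files.size()} files into ${lists.size()} lists of up to 1000 files")

// The returned list of chunks is the script result (e.g. stored via the
// script task's camunda:resultVariable) and can feed the outer multi-instance.
return lists
```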
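The scheduling described in this post (chunks one after another, the items of a chunk in parallel) can be mimicked in plain Java outside Camunda, which may help when reasoning about how much work is in flight at once. This is an analogy only: in Camunda, any real concurrency comes from the engine (for example asynchronous continuations picked up by the job executor), not from an `ExecutorService`, and the class name, thread count and chunk size here are assumptions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Consumer;

public class ChunkedParallel {

    // Process items chunk by chunk; items inside one chunk run concurrently
    // on a fixed pool of `threads` workers.
    static <T> void run(List<T> items, int chunkSize, int threads, Consumer<T> work) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            for (int from = 0; from < items.size(); from += chunkSize) {
                List<T> chunk = items.subList(from, Math.min(from + chunkSize, items.size()));
                List<Future<?>> pending = new ArrayList<>();
                for (T item : chunk) {
                    pending.add(pool.submit(() -> work.accept(item)));
                }
                // Barrier: wait for the whole chunk before submitting the next,
                // so at most chunkSize items are queued or running at once.
                for (Future<?> f : pending) {
                    f.get();
                }
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("item failed", e.getCause());
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        List<Integer> items = new ArrayList<>();
        for (int i = 0; i < 2_500; i++) {
            items.add(i);
        }
        AtomicInteger done = new AtomicInteger();
        run(items, 1_000, 4, item -> done.incrementAndGet());
        System.out.println("processed " + done.get() + " items"); // processed 2500 items
    }
}
```

The barrier between chunks is what bounds the amount of concurrent state, which is the property that kept the engine's execution tree manageable in the scenario above.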