Hi @dscheinin,

I was able to reproduce your problem: the async continuation is not picked up after a server restart.

What I did:

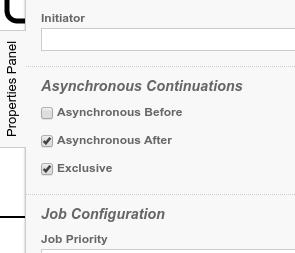

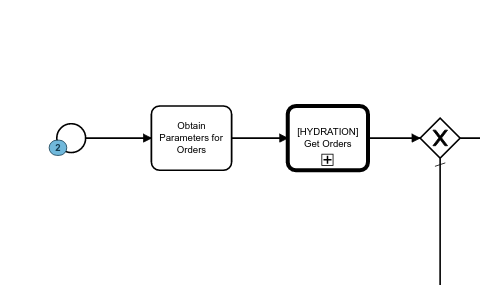

- Modeled a process with Asynchronous After set on the start event

- Deployed it via the REST API to the prepackaged shared process engine running on Tomcat

- Started a process instance, which worked fine

- Restarted the Tomcat server

- Started another process instance. This one got stuck at the job.

Then I diagnosed the problem as described by Thorben here: https://blog.camunda.com/post/2019/10/job-executor-what-is-going-on-in-my-process-engine/.

The job is created in the database with a deployment id of d7eeb0ec-7a5e-11ea-ac1d-3ce1a1c19785.

After adding debug output for the job acquisition, I found this snippet in the log:

09-Apr-2020 14:56:46.207 FEIN [Thread-5] org.camunda.commons.logging.BaseLogger.logDebug ENGINE-13005 Starting command -------------------- AcquireJobsCmd ----------------------

09-Apr-2020 14:56:46.207 FEIN [Thread-5] org.camunda.commons.logging.BaseLogger.logDebug ENGINE-13009 opening new command context

09-Apr-2020 14:56:46.209 FEIN [Thread-5] org.apache.ibatis.logging.jdbc.BaseJdbcLogger.debug ==> Preparing: select RES.ID_, RES.REV_, RES.DUEDATE_, RES.PROCESS_INSTANCE_ID_, RES.EXCLUSIVE_ from ACT_RU_JOB RES where (RES.RETRIES_ > 0) and ( RES.DUEDATE_ is null or RES.DUEDATE_ <= ? ) and (RES.LOCK_OWNER_ is null or RES.LOCK_EXP_TIME_ < ?) and RES.SUSPENSION_STATE_ = 1 and (RES.DEPLOYMENT_ID_ is null or ( RES.DEPLOYMENT_ID_ IN ( ? , ? ) ) ) and ( ( RES.EXCLUSIVE_ = 1 and not exists( select J2.ID_ from ACT_RU_JOB J2 where J2.PROCESS_INSTANCE_ID_ = RES.PROCESS_INSTANCE_ID_ -- from the same proc. inst. and (J2.EXCLUSIVE_ = 1) -- also exclusive and (J2.LOCK_OWNER_ is not null and J2.LOCK_EXP_TIME_ >= ?) -- in progress ) ) or RES.EXCLUSIVE_ = 0 ) LIMIT ? OFFSET ?

09-Apr-2020 14:56:46.210 FEIN [Thread-5] org.apache.ibatis.logging.jdbc.BaseJdbcLogger.debug ==> Parameters: 2020-04-09 14:56:46.207(Timestamp), 2020-04-09 14:56:46.207(Timestamp), b2ae552f-6ad1-11ea-8375-3ce1a1c19785(String), b2e62e19-6ad1-11ea-8375-3ce1a1c19785(String), 2020-04-09 14:56:46.207(Timestamp), 3(Integer), 0(Integer)

09-Apr-2020 14:56:46.211 FEIN [Thread-5] org.apache.ibatis.logging.jdbc.BaseJdbcLogger.debug <== Total: 0

09-Apr-2020 14:56:46.211 FEIN [Thread-5] org.camunda.commons.logging.BaseLogger.logDebug ENGINE-13011 closing existing command context

09-Apr-2020 14:56:46.212 FEIN [Thread-5] org.camunda.commons.logging.BaseLogger.logDebug ENGINE-13006 Finishing command -------------------- AcquireJobsCmd ----------------------

The crucial part of the query is and (RES.DEPLOYMENT_ID_ is null or ( RES.DEPLOYMENT_ID_ IN ( ? , ? ) ) ) with the parameters b2ae552f-6ad1-11ea-8375-3ce1a1c19785 and b2e62e19-6ad1-11ea-8375-3ce1a1c19785. Neither of them matches the deployment id of the job from above (d7eeb0ec-7a5e-11ea-ac1d-3ce1a1c19785), so the query returns no rows and the job is not acquired.
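To make the effect of that condition concrete, here is a minimal sketch in plain Java that mirrors only the deployment part of the logged query. The class and method names are made up for illustration and are not engine API; the ids are the ones from the log and the database above.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

// Hypothetical illustration, not Camunda code: it only mirrors the condition
// (RES.DEPLOYMENT_ID_ is null or RES.DEPLOYMENT_ID_ IN (?, ?)) from the query.
public class DeploymentAwareSketch {

    // A job passes the filter if it has no deployment id at all,
    // or if its deployment id is among the ids passed to the query.
    static boolean isAcquirable(String jobDeploymentId, Set<String> queryDeploymentIds) {
        return jobDeploymentId == null || queryDeploymentIds.contains(jobDeploymentId);
    }

    public static void main(String[] args) {
        // The two deployment ids bound as query parameters in the log.
        Set<String> queryDeploymentIds = new HashSet<>(Arrays.asList(
                "b2ae552f-6ad1-11ea-8375-3ce1a1c19785",
                "b2e62e19-6ad1-11ea-8375-3ce1a1c19785"));

        // The deployment id stored with the stuck job.
        String jobDeploymentId = "d7eeb0ec-7a5e-11ea-ac1d-3ce1a1c19785";

        // Prints false: the stuck job is filtered out by the condition.
        System.out.println(isAcquirable(jobDeploymentId, queryDeploymentIds));
        // Prints true: a job without a deployment id would pass.
        System.out.println(isAcquirable(null, queryDeploymentIds));
    }
}
```

The sketch leaves out the other parts of the query (retries, due date, locks, suspension state, exclusive jobs), since those are not what keeps this job from being picked up.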

To overcome this issue, you could either switch to a different deployment model and deploy new process models by redeploying a process application as a WAR file: https://docs.camunda.org/get-started/java-process-app/

Or change the setting in the bpm-platform.xml for the job executor:

<property name="jobExecutorDeploymentAware">false</property>

But be aware that this has other implications for heterogeneous cluster setups and process applications.

Hope this helps, Ingo